Not All Eye Tracking Is Created Equal

In today’s high-tech world — in which people think nothing of engaging in discussions with their computer via Siri or accessing apps while walking the streets via Google Glass — it’s hard to imagine the level of resistance and skepticism that Perception Research Services (PRS) faced when we introduced the use of eye-tracking technology for marketing research back in the early 1970s. At that time, eye tracking — which documents precisely where people are looking, based on corneal reflections — was used primarily for military, medical and scientific applications.

Since that time, eye tracking has become a widely accepted and validated technique in the marketing research industry. In fact, researchers, marketers and even designers have not only acknowledged the value of eye tracking, they have also embraced its use in determining how well their marketing materials:

- Break through clutter and gain consideration (e.g., Do shoppers even see a package within a cluttered shelf?)

- Hold attention and highlight key marketing messages (e.g., Do shoppers engage with specific on-pack elements/claims?)

Given the widespread use and acknowledged value of eye tracking, it’s not surprising that companies would offer cheaper alternatives. Two such offerings have recently been promoted as substitutes for eye tracking:

- A mouse-clicking exercise conducted via a computer-based interview (“click on what you saw”)

- A software algorithm intended to predict visual attention without actual consumer research (Visual Attention Service)

Importantly, some people mistakenly think of these as alternative eye-tracking techniques because both supposedly provide indications of visibility. In fact, neither is any sort of eye tracking at all.

Nevertheless, we recently conducted parallel research to understand the validity of these approaches, by comparing their outputs directly to those generated using actual eye tracking (via in-person interviews).

What we found was quite clear: Neither approach provides an accurate substitute for eye tracking — and the conclusions derived from these methods can be quite misleading.

“CLICK ON WHAT YOU SAW”

To gauge this methodology, PRS fielded a series of parallel packaging studies:

- In each study, one set of 150 shoppers went through a PRS Eye-Tracking exercise (recording what was actually seen, as it occurred, while viewing a series of cluttered product categories at the shelf and individual packages) conducted via in-person interviews in multiple central location facilities across the U.S.

- A matched set of 150 shoppers, with similar demographics and brand usage, saw the identical set of shelves and pack images via the Web and were asked to “click on the first three things that catch your attention” within each visual.

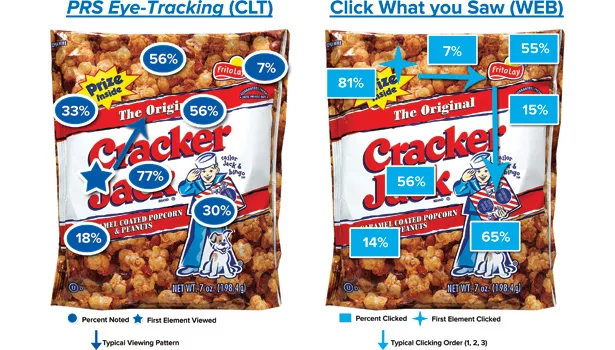

As the Cracker Jack example (right) illustrates, the data varied dramatically between the two techniques:

While 81 percent of Web respondents clicked on the “Prize Inside” burst (as one of the first three things they “saw”), PRS Eye-Tracking revealed that only 33 percent of shoppers visually noted that element as they viewed the package.

Thus, the clicking method dramatically overstated the actual visibility of this claim.

Visibility levels varied widely between methodologies for several other packaging elements, including the background popcorn (56 percent noting via PRS Eye-Tracking versus 7 percent clicking) and the Jack and Bingo visual (30 percent noting versus 65 percent clicking). In addition, the primary viewing pattern, represented by the arrows, also differed noticeably between the two methods, with varying start points (branding versus burst) and flows.
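To give a sense of how large these gaps are relative to sampling error, the Cracker Jack figures can be run through a standard pooled two-proportion z-test. This is only a minimal sketch: the article does not say which significance test was applied, and the code assumes each percentage rests on the full base of 150 shoppers per method. The function name `two_proportion_z` is illustrative.

```python
from math import sqrt, erfc


def two_proportion_z(p1, n1, p2, n2):
    """Pooled two-proportion z-test; returns (z, two-sided p-value)."""
    pooled = (p1 * n1 + p2 * n2) / (n1 + n2)
    se = sqrt(pooled * (1 - pooled) * (1 / n1 + 1 / n2))
    z = (p1 - p2) / se
    # Two-sided p-value from the standard normal distribution.
    p_value = erfc(abs(z) / sqrt(2))
    return z, p_value


# ASSUMPTION: every percentage is based on all 150 shoppers in each cell.
N = 150

# (eye-tracking noting share, click share) as reported for the Cracker Jack pack.
elements = {
    "Prize Inside burst": (0.33, 0.81),
    "Background popcorn": (0.56, 0.07),
    "Jack and Bingo visual": (0.30, 0.65),
}

for name, (eye, click) in elements.items():
    z, p = two_proportion_z(click, N, eye, N)
    gap = round((click - eye) * 100)
    print(f"{name}: click minus eye tracking = {gap:+d} points, z = {z:.2f}, p = {p:.2g}")
```

Under these assumptions, all three gaps sit well beyond what sampling variation between two matched groups of 150 would be expected to produce.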

In addition to comparing the outcomes on an absolute basis for a given package, we also assessed the shifts that each technique demonstrated between the control (current) and test (proposed) package.

Specifically, we analyzed whether the approaches would lead to similar conclusions on whether the test design was increasing or decreasing the visibility of specific design elements (the “Prize Inside” claim, the main visual, etc.). This is particularly important because these findings are critical in driving recommendations for design refinements (such as enhancing readability of the flavor name).

In the Cracker Jack example, the findings from the two methods were dramatically different:

- PRS Eye-Tracking revealed that the test packaging increased the visibility of two of the five primary packaging elements, while decreasing the visibility of three other elements. For example, PRS Eye-Tracking showed that the visibility of the “Prize Inside” flag was significantly higher in the test design (versus the control).

- The clicking method reached the opposite conclusion on four of the five design elements. For example, the clicking method suggested the “Prize Inside” flag had significantly lower visibility in the test design (versus the control).

Similar patterns were observed across many of the cases in which we ran parallel tests, on both shelf visibility and pack-viewing patterns.

This suggests that relying on the click method will likely result in proposing very different types of design refinements than if using noting scores from actual eye tracking.
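The control-versus-test comparison described above comes down to checking, element by element, whether two methods agree on the direction of the shift. A minimal sketch of that check follows. Only the “Prize Inside” directions come from the case described here; the remaining element names and directions are hypothetical placeholders, chosen solely to match the reported totals (two increases and three decreases via eye tracking, with opposite conclusions on four of five elements).

```python
# +1 = test design raised visibility versus control, -1 = lowered it.
# Only the "Prize Inside" row reflects the article; "element_b" through
# "element_e" are HYPOTHETICAL placeholders, not reported results.
eye_tracking = {
    "Prize Inside": +1,
    "element_b": +1,
    "element_c": -1,
    "element_d": -1,
    "element_e": -1,
}
clicking = {
    "Prize Inside": -1,
    "element_b": -1,
    "element_c": +1,
    "element_d": +1,
    "element_e": -1,
}


def compare_directions(reference, alternative):
    """Split elements into those where both methods agree on direction and those where they do not."""
    agree = [e for e in reference if reference[e] == alternative[e]]
    disagree = [e for e in reference if reference[e] != alternative[e]]
    return agree, disagree


agree, disagree = compare_directions(eye_tracking, clicking)
print(f"Same conclusion on {len(agree)} of {len(eye_tracking)} elements")
print("Opposite conclusions on:", ", ".join(disagree))
```

Each disagreement in such a tally is a design element for which the two methods would point toward opposite refinements.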

What do we believe is driving the significant differences between the methodologies?

Across packages, click rates ran higher than PRS Eye-Tracking noting scores on the more cognitive, and perhaps more compelling, elements of the pack (such as the prize on Cracker Jack) and lower on the more mundane, and perhaps less unique or compelling, elements (such as the popcorn).

Perhaps, rather than clicking on what they first saw, shoppers were actually clicking on what most interested them. This hypothesis makes intuitive sense, given that the clicking exercise requires a conscious thought process, while PRS Eye-Tracking is measuring an involuntary, physiological activity (actual eye fixations).

While the clicking exercise may offer value as part of a research study if used and interpreted properly (e.g., “Click on what you liked”), it is clear from these data that it should not be used as a substitute for eye tracking, because it does not accurately document what people actually see or miss.

VISUAL ATTENTION SERVICE (VAS)

VAS is a software algorithm that is used to predict eye-tracking results. No actual consumer research is conducted: One simply uploads an image (of a shelf, a package, etc.), and the software calculates the visibility of different elements within the image.

To assess this technique, PRS loaded 20 images (of 10 shelves and 10 packs) into the VAS software and compared the results to those gathered from PRS Eye-Tracking of the same images (conducted in central location studies, among an in-person sample of 150 shoppers).

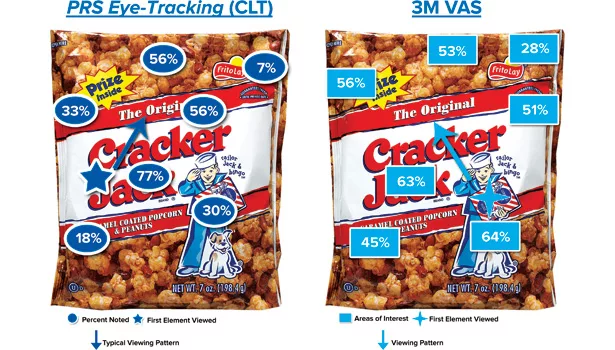

Shown (above) is the same Cracker Jack package, with the PRS Eye-Tracking findings compared to the VAS results. As the illustration reveals, the VAS results showed far less differentiation among the packaging elements, as most results were in the neighborhood of 50 percent. VAS also dramatically overestimated the visibility of the “Prize Inside” claim (56 percent versus 33 percent from actual eye tracking).

More importantly, the VAS system did not demonstrate differences between the control and test packaging (not shown due to confidentiality). While PRS Eye-Tracking revealed significant differences between control and test in the visibility of all five primary design elements, the VAS system predicted no differences.

These two primary themes from the Cracker Jack case were repeated across multiple examples:

- The VAS system was particularly poor in accurately predicting the visibility levels of on-pack claims and messaging, as it frequently projected higher visibility levels than documented via PRS Eye-Tracking.

- The VAS system did not appear to be very sensitive to detecting differences across design systems.

In fact, we found that over half of the research conclusions would have been different using the VAS system as opposed to PRS Eye-Tracking.

Again, this suggests that relying on the VAS technique will likely result in proposing very different types of design refinements than if using noting scores from actual eye tracking.

What do we believe is driving the significant differences between the methodologies?

While we were able to develop a hypothesis as to why the clicking data differed from the eye tracking (the fact that it’s a more cognitive exercise), there seemed to be little predictability with the VAS software. Simply put, the algorithm did not appear to be sufficiently sensitive or accurate to correctly predict actual shopper eye movement in the context of complex shelves and/or packages.

CONCLUSIONS AND IMPLICATIONS

This parallel research shows that neither “click on what you saw” nor VAS is a credible, accurate replacement for actual eye tracking, as they:

- Generate inaccurate data on an absolute level (percent noting specific design elements)

- Lead to different research conclusions regarding the impact of new packaging (on-shelf visibility and the visibility of on-pack claims)

While there may be other valuable uses for these tools, they should not be considered substitutes for quantitative eye tracking.

Simply put, if the objective is to learn what shoppers see and miss, there is no valid substitute for actual in-person eye tracking.
